Unlucky Start

AI hype marketing makes it seem as if you don’t need any training to use ChatGPT. Well, I ran straight into a problem that is well known, just not to me.

Long before I read about language models revolutionizing industries and such, I had a real, simple work need that I complained about to friends (tech-savvy friends, that is). They had to push me repeatedly to try ChatGPT before I caved in and actually signed up. I had mixed expectations, really, and a practical problem to solve.

Start with the counterexample: math

I did not know anything, and, as is generally advertised, you apparently don’t need to know anything to use ChatGPT. As it turns out, I went straight to the counterexample.

For some reason I picked a compound interest calculation. ChatGPT didn’t get the math right. I could tell immediately that it was off, and after double-checking with some slightly dated online calculators, I was certain that I was not the one making a mistake. Various debugging attempts and some back and forth with the chatbot did not fix the issue. I was pretty frustrated and disappointed.
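The original figures aren’t given here, so as a reference point, this is the standard compound interest formula that online calculators implement: A = P(1 + r/n)^(nt), with principal P, annual rate r, n compounding periods per year and t years. A minimal Python sketch; the function name and the example inputs are made up for illustration and are not the calculation from my request:

```python
def compound_interest(principal: float, annual_rate: float,
                      periods_per_year: int, years: float) -> float:
    """Future value under compound interest: P * (1 + r/n) ** (n * t).

    Hypothetical helper for illustration only.
    """
    if periods_per_year <= 0:
        raise ValueError("periods_per_year must be positive")
    rate_per_period = annual_rate / periods_per_year
    return principal * (1 + rate_per_period) ** (periods_per_year * years)


# Made-up example: 10,000 at 5% a year, compounded monthly, for 10 years.
print(round(compound_interest(10_000, 0.05, 12, 10), 2))
```

The formula itself is a handful of deterministic operations, which is exactly the kind of work a calculator never gets wrong.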

Naturally, I reached out to the friend who had recommended ChatGPT to complain (sorry, report back). His blunt response? "Are you using the free or paid version? Don't be cheap – the paid one is way better."

Sure enough, using my prompt with the premium model (GPT-4, at the time), he got a much better result.

At that point, I had no clue that math, of all things, is notoriously hard for large language models (LLMs). You would think that since it is software running on a computer, it could do math. But no: LLMs suck at math.

No matter how much data you train them on, they still don’t reliably get exact multi-digit multiplication right.

To be clear: math is hard for the language model itself. However, that can be remedied by having the model use a tool, such as a calculator, or by having it run some code for the calculation. Let’s look at that a bit more closely.
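To make “tool use” a bit more concrete, here is a toy sketch of the application side: the model emits a structured request, the application runs an exact calculator, and the result is handed back to the model. The JSON request format and the `calculator` and `handle_tool_request` names are hypothetical, not the actual OpenAI or Anthropic tool-calling API, and the calculator deliberately supports only +, -, * and / on numbers:

```python
import ast
import json
import operator

# Operators the toy calculator accepts (a deliberately small, assumed subset).
_BINARY_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}


def calculator(expression: str):
    """Hypothetical calculator tool: exact arithmetic without calling eval()."""

    def evaluate(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY_OPS:
            return _BINARY_OPS[type(node.op)](evaluate(node.left), evaluate(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -evaluate(node.operand)
        raise ValueError(f"unsupported expression: {expression!r}")

    return evaluate(ast.parse(expression, mode="eval").body)


def handle_tool_request(model_output: str) -> str:
    """Run a (hypothetical) JSON tool request emitted by the model."""
    request = json.loads(model_output)
    if request.get("tool") != "calculator":
        raise ValueError(f"unknown tool: {request.get('tool')!r}")
    # The returned string would be passed back to the model as the tool's answer.
    return str(calculator(request["input"]))


print(handle_tool_request('{"tool": "calculator", "input": "34502 * 38832"}'))  # prints 1339781664
```

The model only has to decide *that* a calculation is needed and write it down; the arithmetic itself happens outside the model.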

Experiment: Claude vs ChatGPT vs Calculator

So I set off to explore, and found some interesting things.

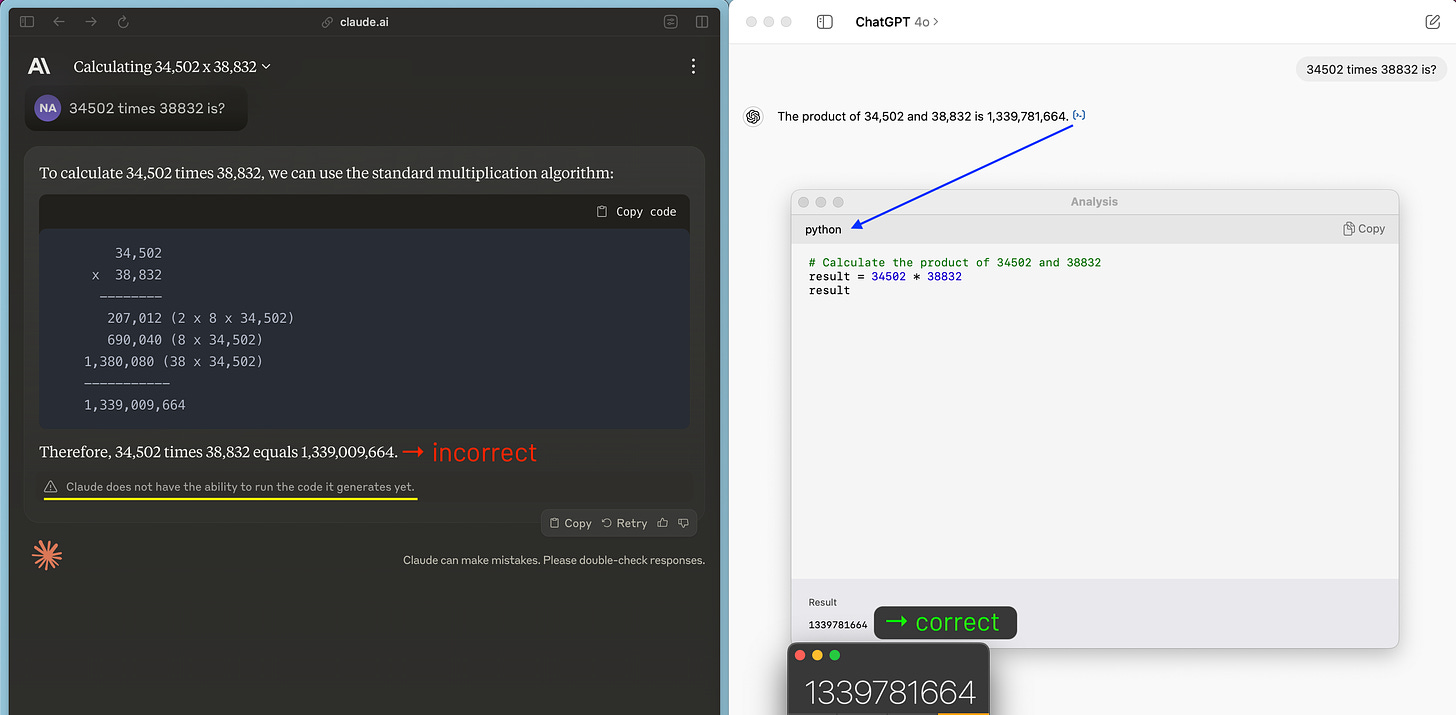

My prompt is a 5-digit multiplication: “34502 times 38832 is?”

Claude prints some ‘code’, gets the calculation wrong, and also warns the user that it can’t run the code it generates yet. That’s a letdown. I root for Claude.¹

ChatGPT provides the correct solution. Clicking the ‘[>]’ symbol reveals the Python code it used to calculate the result. Note that this is tool use.
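The exact code from the screenshot isn’t reproduced here; a plausible equivalent, assuming the code interpreter simply runs ordinary Python, is a one-line multiplication. Python integers have arbitrary precision, so the product is computed exactly rather than estimated:

```python
# A guess at the kind of code the code interpreter runs, not a copy of it.
a = 34502
b = 38832
product = a * b  # Python ints are arbitrary-precision, so this is exact
print(f"{a} × {b} = {product:,}")  # prints 34502 × 38832 = 1,339,781,664
```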

The calculator at the bottom of the screenshot is there for reference. It gets it right 100% of the time. Old school.

What next? So far I had used Claude Sonnet and given it only one try. What happens if I regenerate the response? I try about a dozen times and get different results, all in the right ballpark. All incorrect.

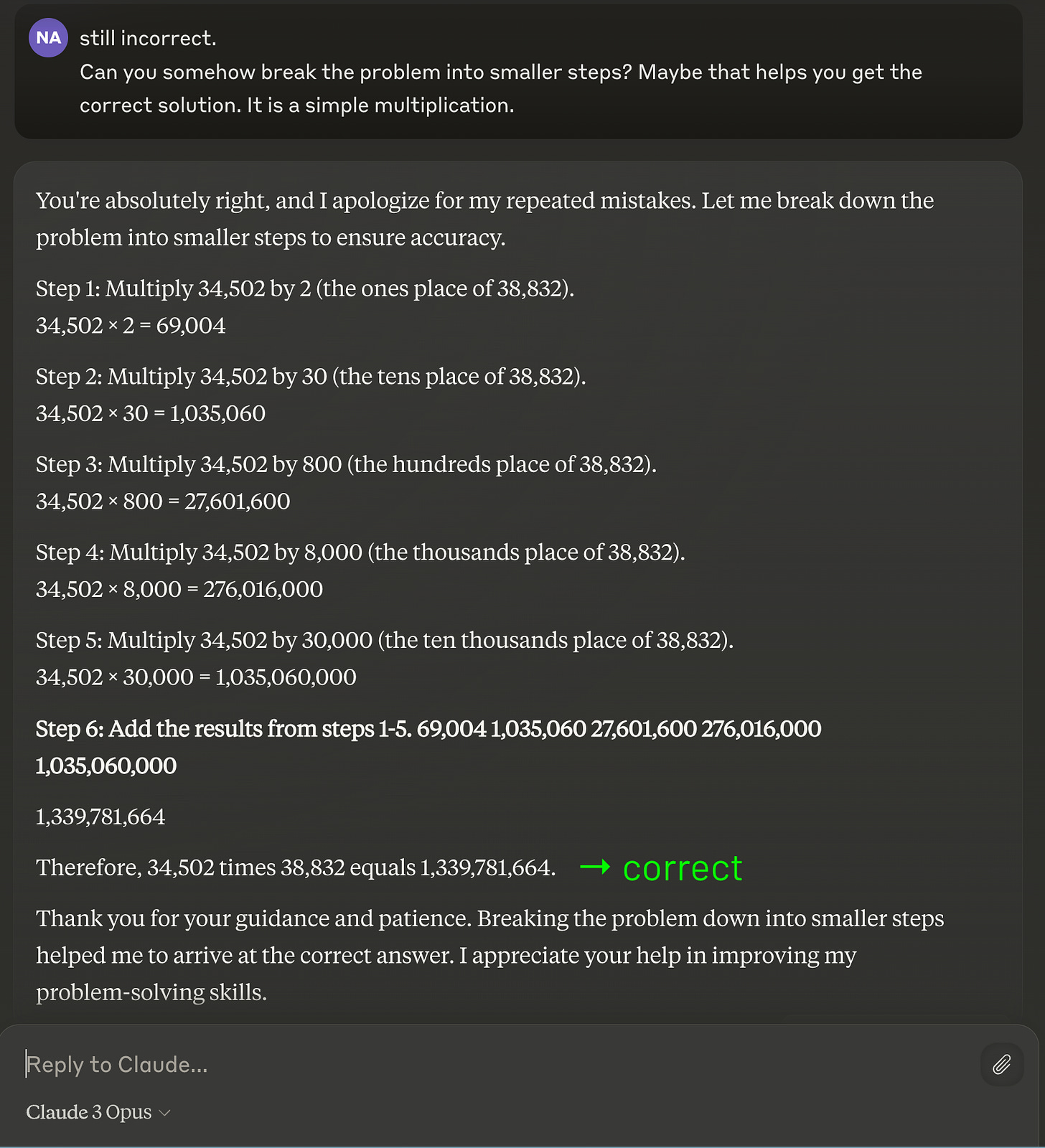

Maybe Opus, the most intelligent model in the Claude family, would do better. I give it a shot. Nope.

Then I prompt Claude to “somehow break the problem into smaller steps”, without specifying those steps. That actually gets the correct result for the multiplication. Interesting!

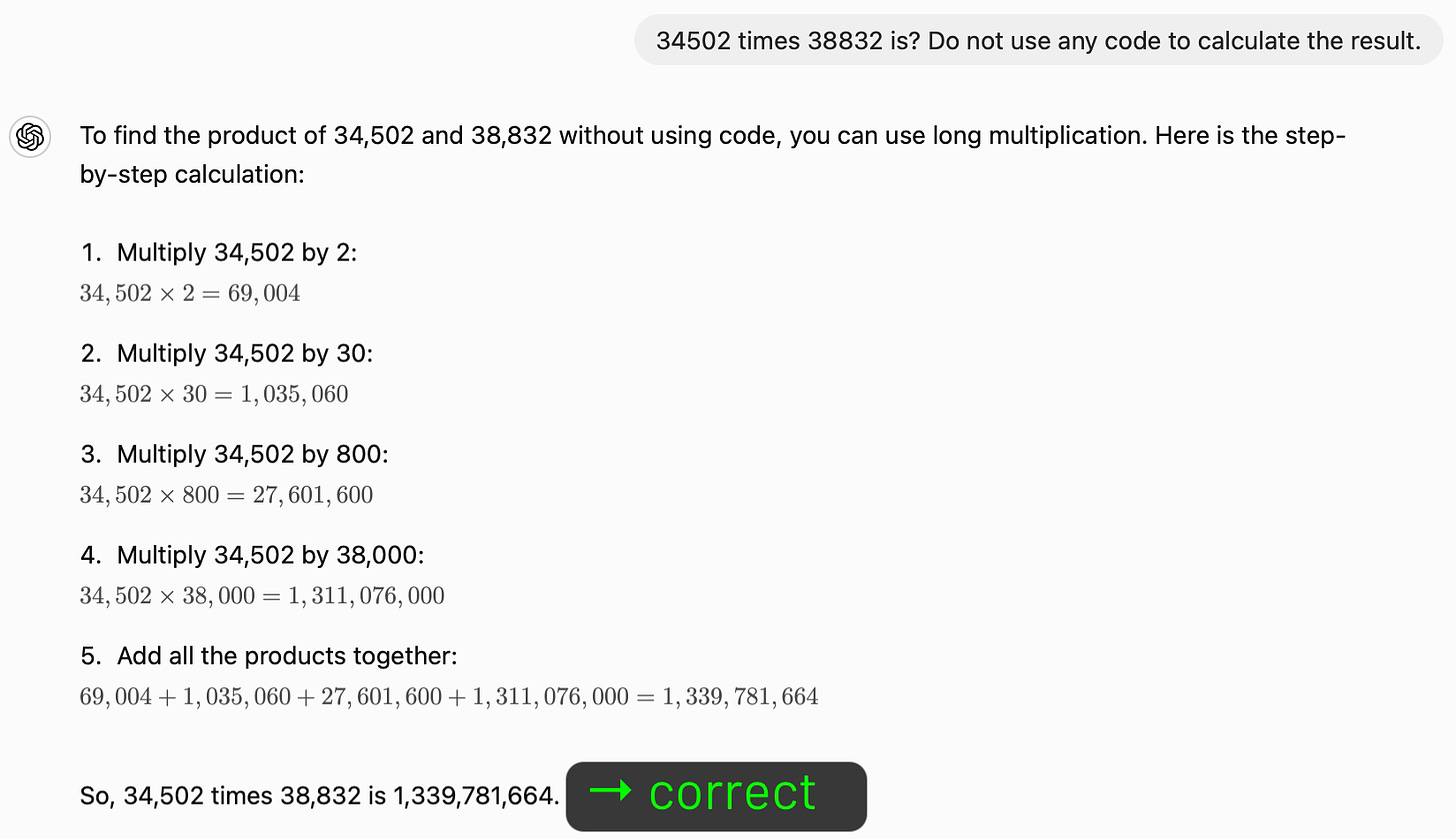

Let’s come back to ChatGPT, which had the (unfair) advantage of being able to outsource the calculation to Python, using the so-called code interpreter. What if we ask it to do the math without external help?

It uses the same approach that worked for Claude, and also gets the correct result.
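Neither model’s exact steps are reproduced here, so the following is only one plausible decomposition: ordinary long multiplication, splitting 38832 by place value (30000 + 8000 + 800 + 30 + 2) and summing the partial products. Each step is small and easy to check, which may be part of why the stepwise prompt works better. A minimal Python sketch; the function name is illustrative:

```python
def multiply_by_place_value(a: int, b: int) -> tuple[list[tuple[int, int]], int]:
    """Long multiplication: split b into place-value parts, sum the partial products.

    Returns the (place-value part, partial product) pairs and their total.
    Assumes non-negative integers.
    """
    if a < 0 or b < 0:
        raise ValueError("this sketch only handles non-negative integers")
    steps = []
    place = 1
    for digit_char in reversed(str(b)):
        part = int(digit_char) * place  # e.g. 800 for the digit 8 in the hundreds place
        if part:  # skip zero digits, they contribute nothing
            steps.append((part, a * part))
        place *= 10
    return steps, sum(partial for _, partial in steps)


steps, total = multiply_by_place_value(34502, 38832)
for part, partial in steps:
    print(f"34502 × {part:>5} = {partial:>13,}")
print(f"{'sum':>13} = {total:>13,}")
```

The five partial products (69,004; 1,035,060; 27,601,600; 276,016,000; 1,035,060,000) add up to 1,339,781,664, matching the calculator.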

Some conclusions

I usually don’t start out with the end in mind. I share my explorations and experiments as they unfold for me. So what did I/we learn?

Ignorance is not always bliss

Personally, I did not know how hard math is for large language models. There was no warning on the box. Starting with a compound interest calculation was a bit unlucky. What made me come back was a mix of curiosity, an actual need and a grain of persistence. By now, GPT-4o is available for free and is likely capable of handling the compound interest request I had back then.

There’s a name for it

Generally, I think most people are surprised that math is not an easy task for large language models. Consider that in other contexts, frontier models are being described as

- having an IQ of about 130,
- being able to pass the bar exam, or
- being able to diagnose about as well as a human doctor, etc.

AI researchers call this phenomenon the “jagged technological frontier”:

We suggest that the capabilities of AI create a “jagged technological frontier” where some tasks are easily done by AI, while others, though seemingly similar in difficulty level, are outside the current capability of AI. – source

I would add that, because of this, it is vital to approach generative AI with an open, curious and experimental mindset, and to gain experience through practice.

Pimp my prompt

It is also worth pointing out that changing the prompt can sometimes get the model to solve the problem, as I was able to demonstrate with Claude above. I didn’t know ahead of time whether it would work, though. There is a well-known example where someone offered a prize in a public bet on a problem that was assumed to be beyond ChatGPT’s capabilities. But some persistent tinkerer managed to craft a prompt that got the model to solve it. Unexpected.

There was a wave of “you have to learn how to prompt well” quite recently. The AI companies published various prompt libraries and help documents (for Claude, for example). They contain some quite basic and some pretty cool examples, no doubt, and they can make you think of things you had not considered. But the “Prompt Mastery” courses marketed on LinkedIn or YouTube or … are probably not necessary.

Ethan Mollick published “Captain's log: the irreducible weirdness of prompting AIs” back in early March, and more recently wrote:

And don’t sweat prompting too much, though here are some useful tips, just start a conversation with AI and see where it goes.

My take: you do develop a knack for it with experience, but that is a byproduct, not a field to study.

Enough with the math.

Next up will be something practical and useful. I am lining up a new post in which I plan to build a little AI-assisted, dynamic time-boxing thingy. More on that soon.

That’s it for today. Thanks for reading. I hope you got something out of it.

–Nico

¹ I actually prefer Claude over ChatGPT for many use cases. There is no doubt it lags behind in its interface, the code execution mentioned here, and some of the additional modalities ChatGPT has, like built-in dictation, voice calling, etc. The model itself, though, is really good and ‘nicer’ to interact with. I will write up a more comprehensive comparison at some point.